Introduction

The European Union (EU) has introduced the AI Act, a legal framework for regulating artificial intelligence. The regulation, approved by the European Parliament on March 13, 2024, marks a significant step towards ensuring the safe and ethical use of AI technologies. First proposed in April 2021, the AI Act builds on previous EU initiatives such as the 2018 Coordinated Plan on AI and the 2020 White Paper on AI. It aims to promote human-centred and trustworthy AI while safeguarding health, safety, and fundamental rights.

Key Concepts and Definitions

The AI Act applies to all providers and deployers of AI systems on the EU market, regardless of where they are established. This broad scope means that any AI product whose output can be received in the EU must comply with the Act. The regulation is particularly relevant for the healthcare sector, as existing laws like the Medical Device Regulation (MDR) and the In Vitro Diagnostic Medical Device Regulation (IVDR) do not explicitly cover medical AI applications.

Risk-Based Approach and Innovation

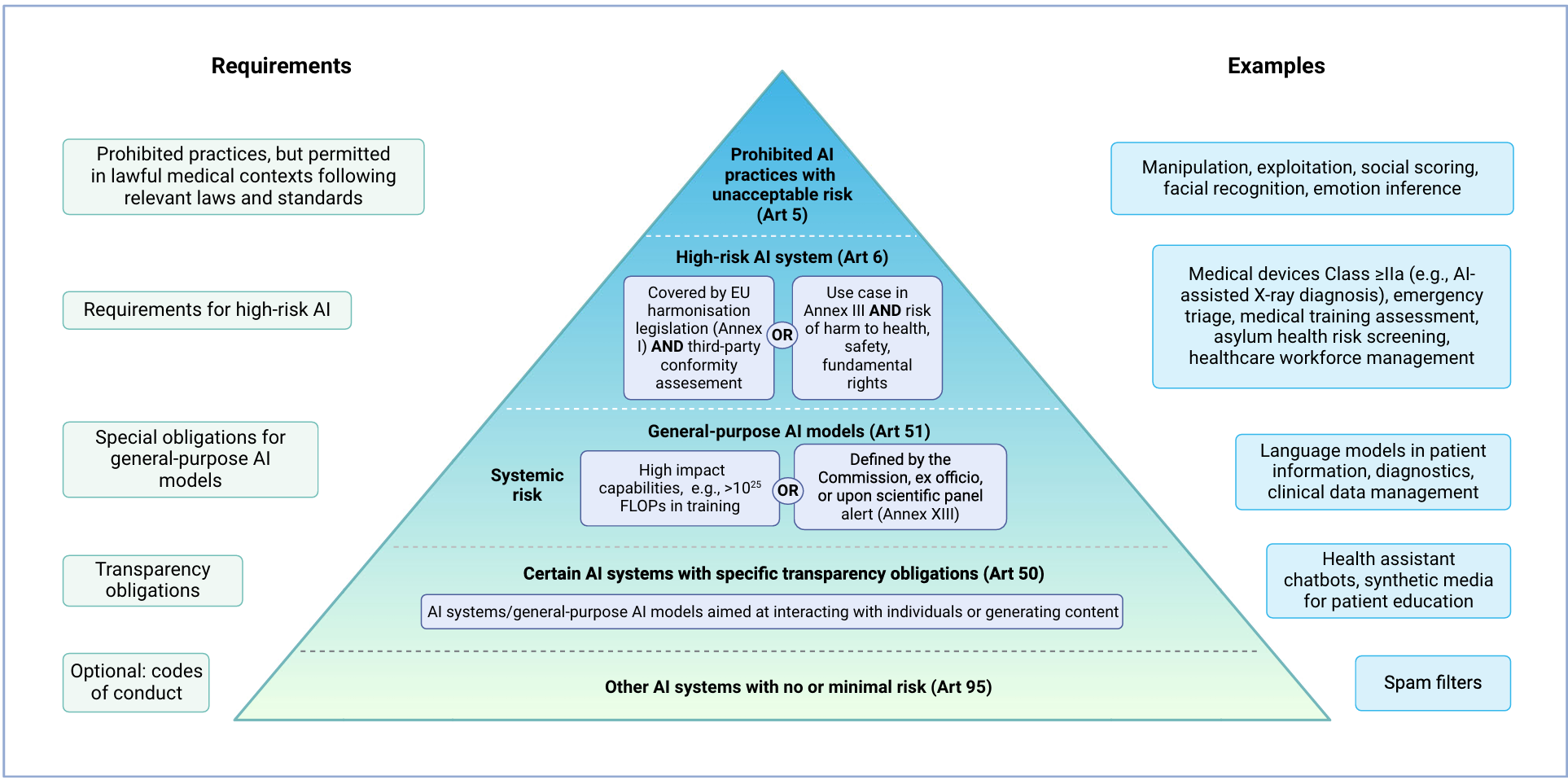

The AI Act follows a risk-based approach, prohibiting certain AI practices deemed to pose unacceptable risks. High-risk AI systems and general-purpose AI (GPAI) models must adhere to stringent requirements. The Act encourages innovation by exempting from its scope AI systems developed for scientific research or personal use. However, open-source AI systems are subject to the same rules as commercial ones if they are monetised.

Prohibited AI Practices

The AI Act bans specific AI practices because the risk they pose is considered unacceptable. These include manipulative and deceptive practices, biometric categorisation, social scoring, and real-time remote biometric identification. Non-compliance can result in severe penalties, including fines of up to 35 million EUR or 7% of worldwide annual turnover, whichever is higher. However, exceptions exist for medical uses, such as psychological treatment and physical rehabilitation, provided they comply with applicable laws and medical standards.
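The fine ceiling for prohibited practices is simple arithmetic, and a short Python sketch makes the break-even point visible. It assumes the higher of the two amounts applies, as the Act provides for companies. The function name and the whole-euro integer convention are illustrative choices, not anything defined by the Act.

```python
FIXED_CAP_EUR = 35_000_000     # fixed ceiling for prohibited practices
TURNOVER_SHARE_PERCENT = 7     # percentage of annual turnover


def max_fine_eur(annual_turnover_eur: int) -> int:
    """Upper bound of the fine for a prohibited practice, in whole euros.

    Assumption: the ceiling is the higher of the fixed cap and the
    turnover-based cap. Integer arithmetic avoids float rounding.
    """
    turnover_cap = annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_cap)


if __name__ == "__main__":
    for turnover in (100_000_000, 1_000_000_000):
        print(f"turnover {turnover:,} EUR -> ceiling {max_fine_eur(turnover):,} EUR")
```

Under this assumption the two caps meet at an annual turnover of 500 million EUR: below that the fixed 35 million EUR ceiling dominates, and above it the 7% share does.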

High-Risk AI Systems

AI systems are classified as high-risk in two cases: first, when they are safety components of products covered by EU harmonisation legislation, such as the MDR or IVDR; second, when they pose significant risks to health, safety, or fundamental rights. Examples of the second case in healthcare include emotion recognition systems and emergency patient triage systems. High-risk AI systems must meet specific requirements, including third-party conformity assessments.
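The two-condition test can be read as a simple either/or rule. The Python sketch below encodes only that logic. The `AISystem` type, its field names, and the example systems are hypothetical, and deciding whether each condition actually holds for a given product remains a legal assessment that code cannot make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    """Hypothetical record of the two facts the classification depends on."""
    name: str
    # Condition 1: safety component of a product covered by EU
    # harmonisation legislation (for example the MDR or IVDR).
    safety_component_of_regulated_product: bool
    # Condition 2: poses significant risks to health, safety,
    # or fundamental rights.
    poses_significant_risk: bool


def is_high_risk(system: AISystem) -> bool:
    """A system is high-risk if either condition is met."""
    return (
        system.safety_component_of_regulated_product
        or system.poses_significant_risk
    )


if __name__ == "__main__":
    examples = [
        AISystem("radiology image analysis in a medical device", True, False),
        AISystem("emergency patient triage", False, True),
        AISystem("meeting-room booking assistant", False, False),
    ]
    for s in examples:
        print(f"{s.name}: high-risk = {is_high_risk(s)}")
```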

General-Purpose AI Models (GPAI)

GPAI models can be used as stand-alone high-risk AI systems or as components of other AI systems. Because they can serve many downstream tasks, these models must always fulfil certain baseline requirements. GPAI models are further classified by systemic risk, which is determined by their computational power and their potential impact on public health, safety, and fundamental rights. Models presenting systemic risks face additional requirements, such as model evaluation and cybersecurity measures.

Transparency Obligations

All AI models, including GPAI, must meet transparency obligations if they interact with natural persons or generate content. In healthcare, this applies to virtual health assistants and chatbots. Users must be informed that they are interacting with an AI system, and generated output should be marked in a machine-readable format and recognisable as artificially generated.

Implications for Healthcare

The AI Act’s impact on healthcare AI applications is significant. Most commercial AI-enabled medical devices, particularly those in radiology, will be classified as high-risk and must comply with the Act’s requirements. The regulation’s intersection with existing laws like the MDR and IVDR may complicate the authorisation process, increasing regulatory complexity and costs. Small and medium-sized enterprises may face additional challenges due to the regulatory burden.

Conclusion

The AI Act represents a comprehensive legal framework aimed at ensuring the safe and ethical use of AI across various industries, including healthcare. While the regulation promotes innovation and competitiveness, it also imposes stringent requirements on high-risk AI systems and GPAI models. The Act’s implementation will likely increase regulatory complexity and costs for medical AI products, impacting their market position. However, the AI Act also sets high standards for AI development and use, potentially influencing global regulations.
